Status Checks

HTTP and HTTPS checks

The HTTP and HTTPS checks verify that the LiquidFiles system can connect externally using unrestricted HTTP and HTTPS. This is required to download system updates, download antivirus updates, perform geolocation checks and so on. Support for outgoing proxies may be added at some point, but for now unrestricted access is required.

You can run the tests manually if you want. We're expecting "pong" responses like this:

    root@host ~ % curl -sm 15 http://liquidfiles.s3.amazonaws.com/ping
    pong
    root@host ~ % curl -sm 15 https://liquidfiles.s3.amazonaws.com/ping
    pong

If anything is blocking or trying to proxy the connection, this will fail.

If you know your way around curl, HTTP and HTML, you might have picked up that the response doesn't conform to the HTML standard, and you're correct. The reason is simple: the test is trying to see whether the connection is permitted with no restrictions. As you can also see, the response payload is the 4 bytes p, o, n and g, plus the HTTP headers. Regardless of network architecture, the response will always fit in a single TCP packet.
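The manual test can also be wrapped in a small shell sketch. The function names `is_pong` and `check_ping` are hypothetical and not part of LiquidFiles; the curl flags and URLs are the ones used in the manual test.

```shell
#!/bin/sh
# Hypothetical helpers, not part of LiquidFiles.

# Succeeds only if the response body is exactly "pong".
is_pong() {
    [ "$1" = "pong" ]
}

# Fetch a ping URL with the same curl flags as the manual test
# (-s silent, -m 15 give up after 15 seconds) and check the body.
check_ping() {
    body=$(curl -sm 15 "$1") || return 1
    is_pong "$body"
}

# Usage (needs network access):
#   for url in http://liquidfiles.s3.amazonaws.com/ping \
#              https://liquidfiles.s3.amazonaws.com/ping; do
#       check_ping "$url" && echo "OK   $url" || echo "FAIL $url"
#   done
```

Comparing the body against the literal string means that a proxy login page or block page counts as a failure even when curl itself exits successfully.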

TCP Quality check

This check came about after a customer had installed the system on a network where something was very wrong. They kept claiming that the update mechanism was at fault when in fact something was broken in their environment.

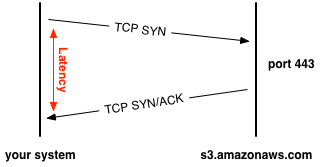

The theory of the test is quite simple. Consider the following diagram.

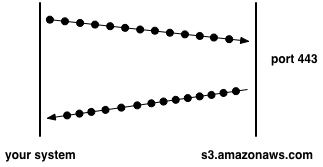

We're sending a TCP SYN packet (the normal TCP initiation packet) to the Amazon S3 cloud on port 443 and waiting for the TCP SYN/ACK packet in response. The latency is the time it takes to get the packet back. To test quality, though, we want to send more packets so that we can measure how many packets get lost along the way. In this test, we're sending 100 packets.

We're sending 10 packets/s so it will take 10s to send 100 packets. We're then simply calculating how many of the TCP SYN/ACK packets return and based on that we know how many packets got lost/discarded.

A couple of pointers

- We are expecting some packet loss. IP is a lossy protocol; that's why we have TCP to take care of retransmits when packets get lost. (If you believe that 0% packet loss would be a good thing, please do some research on token ring vs ethernet, or x.25 vs frame relay. Lossless protocols (token ring, x.25, ...) have always yielded horrible performance, and we've always selected the lossy protocols (ethernet, frame relay, ...) over them and let TCP handle retransmits of the few packets that need to be retransmitted.)

- If we've lost more than 5 packets (5% packet loss), the TCP quality check will be marked as red.

- If you're worried about the data we're sending, each packet is exactly 40 bytes so 100 packets is 4000 bytes, about the size of a favicon, or a page full of text with no images.

- This test only runs when you visit the Status page; it doesn't run periodically at any other time.
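The arithmetic behind these pointers can be sketched in a few lines of shell. The function names are hypothetical, and "green" is an assumed label for a passing check, since only the red state is described here.

```shell
#!/bin/sh
# Hypothetical helpers illustrating the arithmetic; not part of LiquidFiles.

# Packet loss as a whole-number percentage (rounded down),
# given packets sent and SYN/ACK packets received.
loss_percent() {
    sent=$1
    received=$2
    echo $(( (sent - received) * 100 / sent ))
}

# "red" if more than 5% of the packets were lost, otherwise "green"
# ("green" is an assumed label). Compares lost*100 against 5*sent so
# that no rounding is involved.
quality_status() {
    sent=$1
    received=$2
    if [ $(( (sent - received) * 100 )) -gt $(( 5 * sent )) ]; then
        echo red
    else
        echo green
    fi
}

# Total bytes sent by the test: packets * bytes per packet.
bytes_sent() {
    echo $(( $1 * $2 ))
}

loss_percent 100 94     # 6
quality_status 100 95   # green
quality_status 100 94   # red
bytes_sent 100 40       # 4000
```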

If you get more than 5% packet loss, there can be many reasons, including:

- Network congestion, at any point between your system and s3.amazonaws.com, including your own network, your ISP's network and connections between your ISP and the nearest s3.amazonaws.com server farm.

- Faulty hardware - cables, switching, NICs, ...

- Faulty drivers or software.

- Firewall, IPS or QoS device rate-limiting the connection.

- Firewall or IPS blocking the connection. If you've been around Internet security for a long time, you will probably recognize this traffic pattern as a potential SYN flood attack, albeit in reverse. If your firewall is set to protect a system that is 10+ years old against SYN flood attacks, it may block the connection. This is not a concern for modern systems or for firewalls protecting modern systems.

If you want to run this test manually, it uses the hping tool. Please run the following command on the LiquidFiles system or anywhere else you have hping installed:

    root@host ~ % hping --fast -c 100 -S -p 443 -q s3.amazonaws.com
    HPING s3.amazonaws.com (en1 207.171.189.80): S set, 40 headers + 0 data bytes

    --- s3.amazonaws.com hping statistic ---
    100 packets tramitted, 100 packets received, 0% packet loss
    round-trip min/avg/max = 328.4/525.3/664.7 ms

As you can see from these results, we had 0% packet loss and about 525 ms average round-trip time. This is a fairly typical result from Australia: slow but dependable.
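If you want to pull the two headline numbers out of the hping summary automatically, a minimal sketch could look like the following. The function names are hypothetical, and the patterns assume the summary lines look exactly like the sample output above.

```shell
#!/bin/sh
# Hypothetical helpers that read hping's summary text on standard input.

# Print the packet loss percentage, e.g. "0" from "... 0% packet loss".
hping_loss() {
    sed -n 's/.* \([0-9][0-9]*\)% packet loss.*/\1/p'
}

# Print the average round-trip time in ms from the
# "round-trip min/avg/max = a/b/c ms" line.
hping_avg_rtt() {
    sed -n 's|.*min/avg/max = [0-9.]*/\([0-9.]*\)/.*|\1|p'
}

# Usage (needs root and network access). Both output streams are
# captured in case the summary is written to stderr:
#   out=$(hping --fast -c 100 -S -p 443 -q s3.amazonaws.com 2>&1)
#   echo "$out" | hping_loss
#   echo "$out" | hping_avg_rtt
```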

Ok, I get packet loss - how do I fix it?

Since there are many reasons why you might get packet loss, and not all of them are going to be in your control, there is no one-line answer. In general, the higher the percentage of packet loss, the higher the likelihood of a hardware problem. A general rule of thumb would be:

- 100% packet loss - something is blocking the connection completely (firewall, IPS, faulty network configuration, ...).

- 30% - 99% packet loss - hardware failure.

- 6% - 29% packet loss - network congestion.

- 0% - 5% packet loss - normal network behaviour; the longer the distance, the higher the expected packet loss.
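The rule of thumb above can be written as a small lookup. This is only a sketch: the function name `likely_cause` and the short labels it prints are hypothetical, and the thresholds are the ones from the list.

```shell
#!/bin/sh
# Hypothetical helper: map a whole-number packet loss percentage
# to the likely cause from the rule of thumb.
likely_cause() {
    loss=$1
    if [ "$loss" -ge 100 ]; then
        echo "blocked"
    elif [ "$loss" -ge 30 ]; then
        echo "hardware failure"
    elif [ "$loss" -ge 6 ]; then
        echo "network congestion"
    else
        echo "normal"
    fi
}

likely_cause 0     # normal
likely_cause 12    # network congestion
likely_cause 45    # hardware failure
likely_cause 100   # blocked
```

Treat the result as a starting point for troubleshooting rather than a diagnosis; the boundaries between the categories are approximate.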

If you have a hardware failure, this can either be on the local system or a network device in the path.

If you have network congestion, it can be at any point between the LiquidFiles system and s3.amazonaws.com, including your switching, firewalls, internal networks, your ISP's network, your ISP's or your country's international network links, and Amazon's infrastructure and ISPs.

The way to get to the bottom of either a hardware or a network congestion problem is to run the hping command listed above at various points along the path towards s3.amazonaws.com. If you get the same packet loss everywhere, the likelihood is that the problem lies in your network or with the hardware of the system. You can then run hping on another system to see whether it's your network or this particular system. If it's fine on your network but you get packet loss on any remote network, your link to your ISP is likely congested, or your firewall or edge router could be causing the problem. If you start to see the packet loss only when leaving your country, the problem may only be fixable by switching ISPs, or it may be out of your control completely, depending on where in the world you live (if your ISPs share international links). It basically comes down to going step by step to find out where the problem starts and fixing the problem there.